Grok Imagine Image Quality

Grok Imagine Image Quality is xAI's quality-focused image generation and editing model. It is designed for higher realism, stronger multilingual text rendering, tighter prompt following, deeper scene understanding, and more consistent brand-oriented output across both text-to-image and image editing workflows.

Generating images with accurate, readable text

How to generate images with accurate, readable text. Covers prompt techniques for text placement, multilingual rendering, and practical use cases.

Introduction

Text rendering is one of the weakest areas of image generation models. Most diffusion-based systems treat text as visual texture rather than linguistic content. They recognize that letters look a certain way, but they don't understand spelling, word boundaries, or character order. The result is often garbled or misspelled text, making these models unreliable for any use case where words need to be readable.

This model takes a different approach. It includes built-in text rendering that produces images where text is accurately spelled and properly integrated into the scene. Whether you need a brand name on a product label or a headline across a banner, the text will match what you asked for, character by character.

A premium kraft paper coffee bag with the brand name "NORDIC ROAST" printed in matte black serif font, with "Single Origin • Ethiopia" as a smaller subtitle below, sitting on a rustic wooden surface with scattered coffee beans, natural window lighting, shallow depth of field, product photography

This guide covers the prompting techniques that work best, why they work, and practical examples for marketing, packaging, signage, and image editing.

Prompting for accurate text

Text rendering is controlled entirely through your prompt. There are no text layers, font selectors, or bounding boxes. The more explicit you are about what text should appear, where it goes, and how it looks, the more accurate the output will be.

The following techniques are listed in order of importance. Quoting is non-negotiable. Placement and style are refinements that improve quality further.

Quoting the exact text

This is the most important technique for reliable text rendering. Always wrap your desired text in quotation marks within the prompt. Quotes serve as a delimiter that tells the model: this exact string is not a description, it's literal content that should be rendered character by character.

Without quotes, the model treats the text as part of the scene description. It understands the intent (a sign about a latte special) but fills in its own wording, which often results in misspellings, nonsense characters, or text that drifts from what you wrote.

Compare the two prompts below. The first quotes the exact text to render. The second leaves the wording up to the model, which often results in garbled or missing characters.

A coffee shop chalkboard sign that reads "Today's Special: Oat Milk Latte $4.50", white chalk lettering on dark green board, cozy cafe interior background, warm lighting

A coffee shop chalkboard sign advertising an oat milk latte special, white chalk lettering on dark green board, cozy cafe interior background, warm lighting

With the quoted prompt, the model renders every character as written, including the apostrophe in "Today's" and the "$4.50" price. With the unquoted prompt, the model guesses at the wording, and you usually end up with garbled text or copy that doesn't match what you intended.
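A quick way to catch unquoted prompts before sending them is a small check that extracts quoted strings. The sketch below is a local helper, not part of any API; the name quoted_strings is hypothetical, and it assumes literal text is wrapped in straight or curly double quotes.

```python
import re

# Matches text wrapped in straight or curly double quotes.
QUOTED = re.compile(r'["“]([^"”]+)["”]')


def quoted_strings(prompt: str) -> list[str]:
    """Return every double-quoted string found in the prompt."""
    return QUOTED.findall(prompt)


quoted = (
    'A coffee shop chalkboard sign that reads '
    '"Today\'s Special: Oat Milk Latte $4.50", '
    "white chalk lettering on dark green board"
)
unquoted = (
    "A coffee shop chalkboard sign advertising an oat milk latte special, "
    "white chalk lettering on dark green board"
)

print(quoted_strings(quoted))    # one literal string to render
print(quoted_strings(unquoted))  # empty list: the model would choose the wording
```

An empty result is a signal that the prompt describes the text instead of specifying it.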

Specifying placement and style

Now that you know how to get the right words into the image, the next question is where they go and how they look. If your prompt doesn't say anything about placement or style, the model makes those choices for you. It might center the text when you wanted it at the top, or render it in a thin weight when you needed bold. Specifying these details in the prompt gives you control over the final typography.

Think of it as directing a designer rather than using a tool. You wouldn't just say "put some text somewhere". You'd specify the position, size, weight, and color. The model responds to the same level of direction:

- Position: "centered at the top", "on the bottom third", "across the storefront window"

- Style: "bold sans-serif lettering", "handwritten cursive", "embossed gold text"

- Size: "large headline", "small subtitle text", "filling the entire frame"

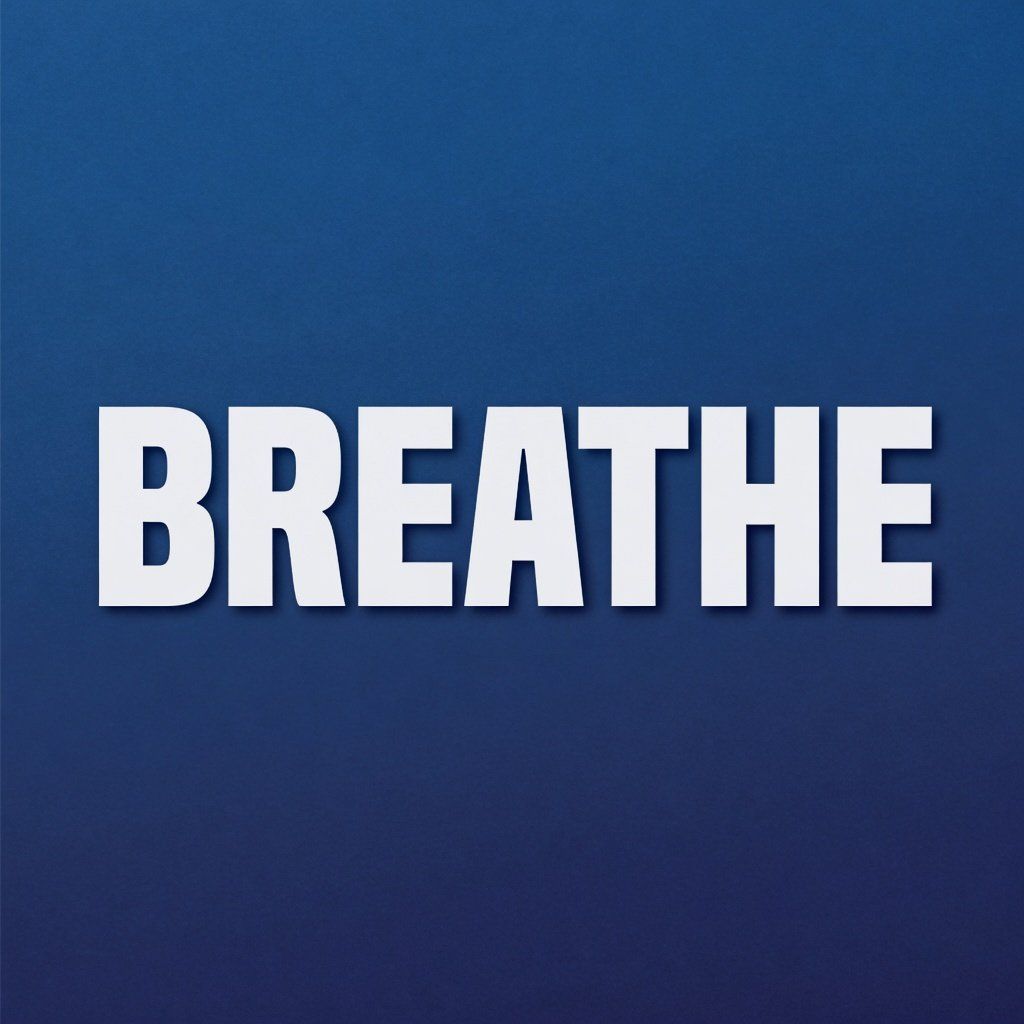

A minimalist poster with large bold white text "BREATHE" centered on a deep blue gradient background, clean sans-serif typography, modern graphic design, print-ready quality

A neon sign reading "OPEN 24 HRS" glowing in pink and blue on a dark brick wall at night, realistic neon tube lighting, slight glow reflection on wet pavement below

A good pattern is scene context first, text content and placement last. Establish the environment (a minimalist poster on a blue background) before introducing the text that needs to be placed into it. This sequencing tends to produce more coherent results.
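The scene-first ordering can be made repeatable with a small prompt builder. This is a minimal sketch: compose_prompt and its parameters are hypothetical names for illustration, and the exact phrasing it produces is one reasonable choice rather than a required format.

```python
def compose_prompt(scene: str, text: str, position: str = "",
                   style: str = "", size: str = "") -> str:
    """Put scene context first, then the quoted text and its placement."""
    # Size and style go directly before the quoted text, e.g. "large bold".
    descriptors = " ".join(part for part in (size, style) if part)
    clause = f'{descriptors} text "{text}"'.strip()
    if position:
        clause += f" {position}"
    # Drop trailing punctuation on the scene so the parts join cleanly.
    return f"{scene.rstrip(' ,.')}, {clause}"


prompt = compose_prompt(
    "A minimalist poster on a deep blue gradient background",
    "BREATHE",
    position="centered",
    style="bold white sans-serif",
    size="large",
)
print(prompt)
```

Keeping position, style, and size as separate arguments makes it easy to vary one of them while holding the scene and wording fixed.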

Multilingual text

The model supports text rendering across Latin, CJK, Arabic, Cyrillic, and other scripts. If you're building localized marketing assets or working across multiple languages, you won't need a separate text-on-image pipeline for each one.

When working with non-Latin scripts, include the target language or script system in your prompt. Rather than just quoting the characters, add cues like "written in Japanese kanji" or "in traditional Arabic calligraphy". This gives the model the context it needs to select the correct glyph set and rendering style.

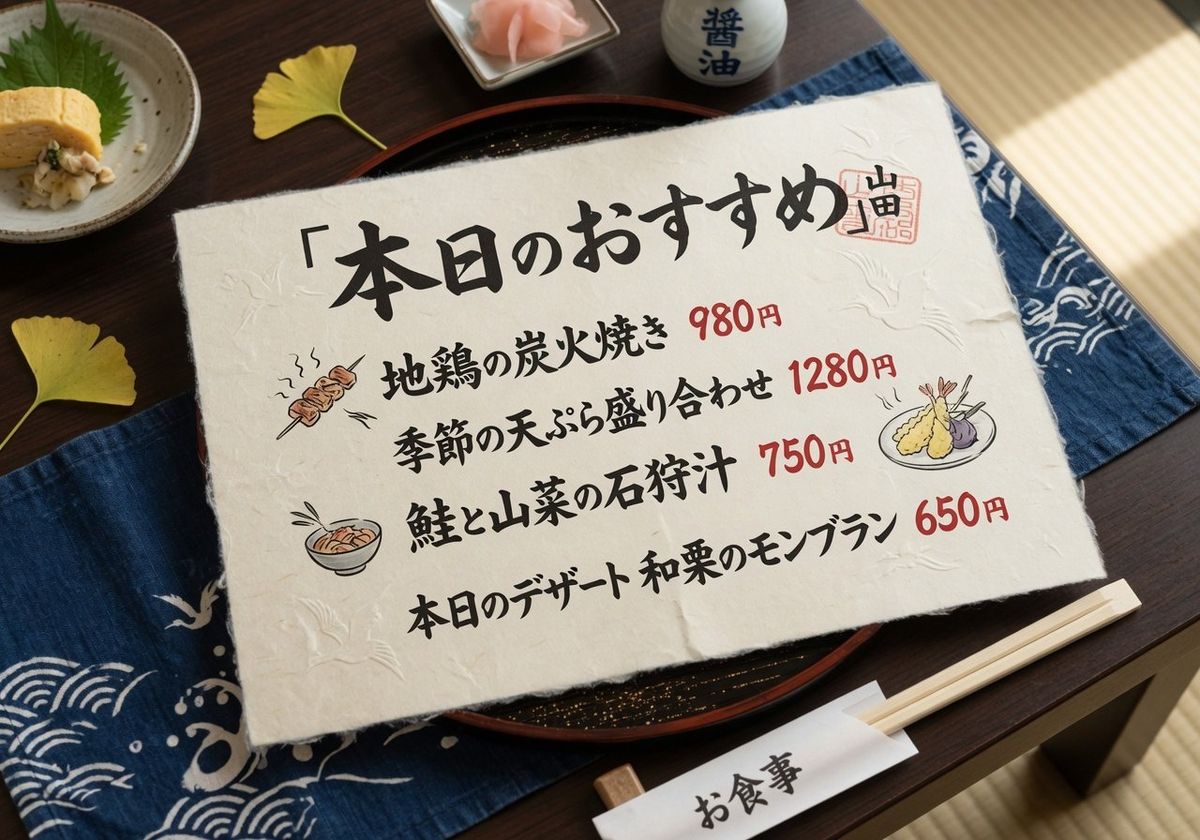

A Japanese restaurant menu card with "本日のおすすめ" written in elegant brush calligraphy on cream washi paper, traditional Japanese dining setting, soft natural light, overhead view

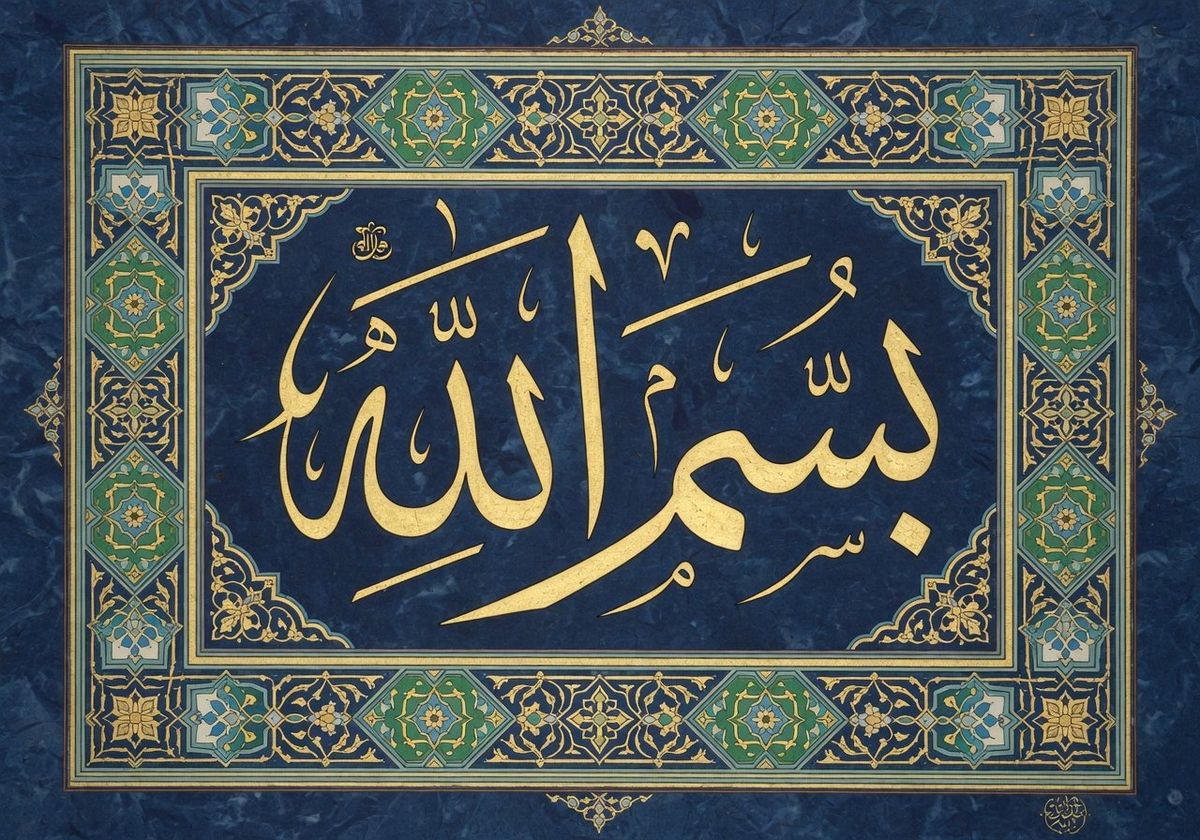

An ornate Arabic calligraphy artwork reading "بسم الله" in gold ink on a dark blue textured background, intricate geometric border patterns, traditional Islamic art style

Text rendering quality varies by script complexity. Latin scripts tend to be the most consistent, benefiting from the largest representation in training data. CJK scripts (Chinese, Japanese, Korean) work well with shorter strings and explicit script mentions. Arabic and other right-to-left scripts benefit from calligraphic style cues, since the model handles ornamental rendering more reliably than plain typographic styles for these character sets.

Accuracy decreases as text length increases, across all scripts. The effect is more noticeable with CJK characters where each glyph carries high visual complexity. For best results, aim for short strings (a title, a brand name, a phrase) rather than full paragraphs.
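When prompts are assembled programmatically, it can help to detect non-Latin text and attach a script cue automatically. The sketch below uses Unicode character names from Python's standard library as a rough script heuristic. The helper names are hypothetical, the cue wording is supplied by the caller, and the heuristic is an approximation, not how the model itself identifies scripts.

```python
import unicodedata


def scripts_in(text: str) -> set[str]:
    """Approximate the scripts in `text` from Unicode character names."""
    names = (unicodedata.name(ch, "") for ch in text if ch.isalpha())
    # The first word of a character name is usually its script,
    # e.g. "HIRAGANA LETTER NO" or "ARABIC LETTER BEH".
    return {name.split()[0] for name in names if name}


def add_script_cue(prompt: str, text: str, cue: str) -> str:
    """Append a caller-supplied cue when the text is not purely Latin."""
    if scripts_in(text) - {"LATIN"}:
        return f"{prompt}, {cue}"
    return prompt


text = "本日のおすすめ"
prompt = add_script_cue(
    f'A Japanese restaurant menu card with "{text}" on cream washi paper',
    text,
    "written in Japanese brush calligraphy",
)
print(scripts_in(text))
print(prompt)
```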

Practical use cases

The following examples show how to structure prompts for specific production scenarios.

Product packaging

Product packaging usually requires multiple prototyping rounds just to explore brand names and label layouts. Text-capable generation makes this much faster.

You can generate realistic product mockups with branded text and label hierarchies in seconds. The model understands the conventions of product photography and can place text on curved surfaces, labels, and packaging in ways that look realistic.

A minimalist frosted glass skincare bottle with a dropper cap, label reading "GLOW LAB" in clean sans-serif font with "Vitamin C Brightening Serum" as a subtitle, sitting on a white marble surface with soft shadows, beauty product photography, neutral tones, studio lighting

For best results with packaging, structure your prompt with three layers: the product description (material, shape, color), the text hierarchy (brand name as primary, product name as secondary), and the photography context (lighting, surface, composition style). This mirrors how a real photographer would brief a product shoot.
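The three layers map naturally onto a small data structure. The following sketch is illustrative only: PackagingBrief and its field names are hypothetical, and the sentence template simply mirrors the skincare example from this section.

```python
from dataclasses import dataclass


@dataclass
class PackagingBrief:
    product: str       # layer 1: material, shape, color
    brand: str         # layer 2: primary text (brand name)
    brand_style: str   # layer 2: typography for the brand name
    product_name: str  # layer 2: secondary text (subtitle)
    photography: str   # layer 3: lighting, surface, composition style

    def to_prompt(self) -> str:
        """Join the layers in order: product, text hierarchy, photography."""
        return (
            f'{self.product}, label reading "{self.brand}" in {self.brand_style} '
            f'with "{self.product_name}" as a subtitle, {self.photography}'
        )


brief = PackagingBrief(
    product="A minimalist frosted glass skincare bottle with a dropper cap",
    brand="GLOW LAB",
    brand_style="clean sans-serif font",
    product_name="Vitamin C Brightening Serum",
    photography=(
        "sitting on a white marble surface with soft shadows, "
        "beauty product photography, neutral tones, studio lighting"
    ),
)
print(brief.to_prompt())
```

Swapping only the brand or product_name field gives a quick way to explore naming options against the same product shot.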

Image editing with text

This model also supports image editing: modifying text in existing images through natural language instructions. You can generate a base image first and iterate on the text separately, or take an existing photograph and add or change text within it.

To edit text in an existing image, pass the source image via inputs.referenceImages and describe what you want to change in positivePrompt. The model finds the text in the source, modifies it according to your instructions, and preserves everything else in the scene.

A framed minimalist motivational poster on a white brick wall, bold black uppercase text reading "DREAM BIG" on a cream background, modern interior, soft natural light from the left, clean aesthetic

Change the poster text to read "STAY CURIOUS" in teal colored letters

The editing request is straightforward. You provide the original image as a reference and describe the change in the prompt:

    [
      {
        "taskType": "imageInference",
        "taskUUID": "b2c3d4e5-f6a7-8901-bcde-f12345678901",
        "model": "xai:grok-imagine@image-quality",
        "positivePrompt": "Change the poster text to read \"STAY CURIOUS\" in teal colored letters",
        "inputs": {
          "referenceImages": [
            {
              "inputImage": "https://example.com/poster.jpg"
            }
          ]
        },
        "width": 1024,
        "height": 1024
      }
    ]

The editing prompt can be much more concise than a generation prompt. You don't need to re-describe the entire scene, just specify what should change. The model changes both the text content and the color while preserving the frame, wall texture, and positioning of the original poster.

Text editing works best when the target text area is visually distinct from its surroundings in the source image. High-contrast text on a clean background edits more reliably than text embedded in complex, textured scenes. If the source image has multiple text elements, be specific about which one to modify (e.g., "change the headline at the top" rather than just "change the text").
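If you build editing requests in code, a small helper keeps the payload consistent and handles quote escaping for you. This is a minimal sketch that only constructs the JSON body using the field names from the request in this section. The function name build_edit_task is hypothetical, and sending the request (endpoint, authentication) is outside the scope of this guide and not shown.

```python
import json
import uuid

MODEL = "xai:grok-imagine@image-quality"  # model ID used in this guide


def build_edit_task(source_image: str, instruction: str,
                    width: int = 1024, height: int = 1024) -> list[dict]:
    """Build a one-task editing payload with a fresh task UUID."""
    return [{
        "taskType": "imageInference",
        "taskUUID": str(uuid.uuid4()),
        "model": MODEL,
        "positivePrompt": instruction,
        "inputs": {"referenceImages": [{"inputImage": source_image}]},
        "width": width,
        "height": height,
    }]


payload = build_edit_task(
    "https://example.com/poster.jpg",
    'Change the headline at the top to read "STAY CURIOUS" in teal colored letters',
)
# json.dumps escapes the inner quotation marks in the prompt.
print(json.dumps(payload, indent=2))
```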

Tips for best results

Getting consistent text rendering comes down to giving the model clear constraints. These practices improve reliability across all the use cases above:

- Keep text short. Strings under 10 words render more reliably than long paragraphs. This reflects how the model allocates visual attention across the composition. For longer copy, consider generating text elements separately and compositing them.

- Always use quotation marks. This is the most impactful technique. Quotes create an unambiguous boundary between scene description and literal text content. Without them, the model will paraphrase, abbreviate, or hallucinate.

- Describe the typography explicitly. Don't leave visual decisions to chance. Specify the font style, weight, color, and approximate size. Prompts like "in large, bold, white Helvetica-style lettering" give the model concrete targets instead of relying on its default aesthetic.

- Lead with the scene, end with the text. Structure your prompt so the model establishes the visual context (a poster on a blue background, a neon sign on a brick wall) before it encounters the text instructions. This sequencing produces more natural integration.

- Generate multiple variations. Use numberResults to produce several images at once and select the best text rendering. Even with optimal prompting, some outputs will be more precise than others. Generating 3–4 variants and picking the best one is often faster than trying to perfect a single prompt.

- Verify at full resolution. Small text errors (a wrong character, an extra pixel) are invisible in thumbnails but obvious at native resolution. Always inspect the final image at 100% zoom before using it in production.

- Use the editing workflow for iteration. If a generated image is 90% perfect but one word is slightly off, don't regenerate from scratch. Use the image editing capability to fix just the text, preserving the composition and scene elements you already like.
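Two of these tips are easy to automate: checking the length of the literal text and requesting several variations. The sketch below is a hedged illustration. The helper names are hypothetical, the 10-word threshold comes from the tip above, and placing numberResults at the top level of the task object is an assumption to confirm against the API reference.

```python
import copy


def word_count_ok(text: str, max_words: int = 10) -> bool:
    """True when the literal text stays under the suggested word limit."""
    return len(text.split()) < max_words


def with_variations(task: dict, count: int = 4) -> dict:
    """Return a copy of the task that asks for several results."""
    updated = copy.deepcopy(task)
    updated["numberResults"] = count  # assumed to be a top-level task field
    return updated


task = {
    "taskType": "imageInference",
    "model": "xai:grok-imagine@image-quality",
    "positivePrompt": 'A neon sign reading "OPEN 24 HRS" on a dark brick wall at night',
}

if word_count_ok("OPEN 24 HRS"):
    task = with_variations(task, count=4)
print(task["numberResults"])
```

Working on a copy leaves the original task untouched, so the same base request can be reused with different variation counts.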